Does real-world use of AI in systematic literature reviews result in time and cost savings?

Can AI drive efficiency in systematic literature reviews?

Systematic literature reviews (SLRs) are a resource-heavy, time-consuming but necessary part of evidence generation. They present an opportunity for artificial intelligence (AI) to deliver significant resource savings, and several AI tools have been developed to reduce the burden on literature review teams.

Here we examine whether an AI-powered SLR platform, EasySLR™, can replace a human reviewer and reduce the time required to complete an SLR without compromising accuracy.

Replacing a human reviewer with AI in an SLR

As the basis of comparison, we used an economic SLR in two very similar breast cancer populations. We tested the ‘AI as a reviewer’ function, which replaces one human reviewer in the SLR process. The transparency of the platform meant that we could examine the decisions and speed of AI and compare them with those of human reviewers in several areas: abstract and full-text screening, data extraction, and development of Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA). To measure accuracy, we used the final decision from the human reviewers as the ‘correct’ decision, against which the AI decision was then compared. Lastly, we compared the time required to complete an SLR across projects both with and without EasySLR™.
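The accuracy measure described above can be sketched in a few lines of Python. This is a minimal illustration, not the platform's own method: the `accuracy` function, the record IDs and the include/exclude labels are all hypothetical, and the only assumption carried over from the text is that the final human decision is treated as the reference.

```python
def accuracy(candidate, reference):
    """Return the share of records where the candidate decision
    matches the reference (final human) decision."""
    if not reference:
        raise ValueError("reference decisions must not be empty")
    missing = reference.keys() - candidate.keys()
    if missing:
        raise ValueError(f"candidate has no decision for: {sorted(missing)}")
    matches = sum(candidate[record] == reference[record] for record in reference)
    return matches / len(reference)


# Hypothetical screening decisions, for illustration only (not study data).
reference_decisions = {
    "rec1": "include",
    "rec2": "exclude",
    "rec3": "exclude",
    "rec4": "include",
}
ai_decisions = {
    "rec1": "include",
    "rec2": "exclude",
    "rec3": "include",  # disagrees with the reference decision
    "rec4": "include",
}

ai_accuracy = accuracy(ai_decisions, reference_decisions)
print(f"AI accuracy vs. final human decision: {ai_accuracy:.0%}")
```

The same function could be applied to an individual human reviewer's first-pass decisions, which would put the human and AI figures on the same footing.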

AI-powered analysis shows similar accuracy to humans across SLR tasks

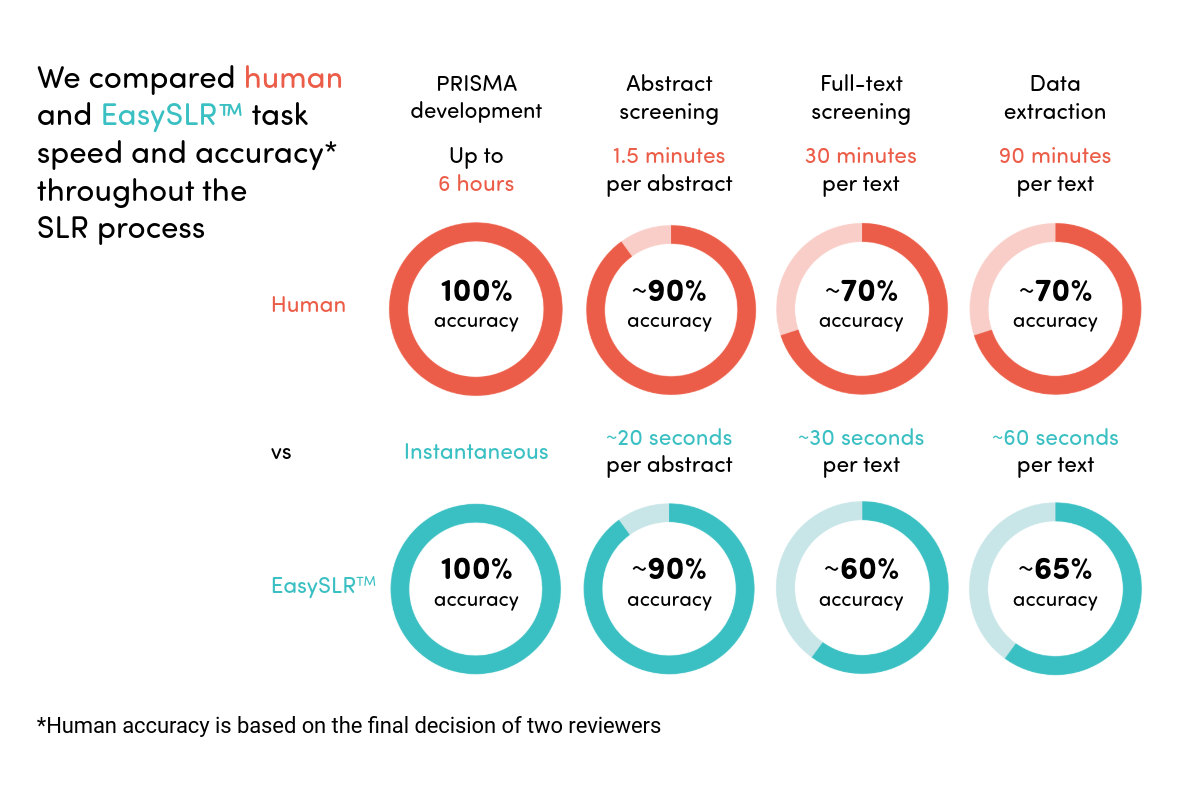

For PRISMA generation and abstract screening, the AI platform performed with accuracy comparable to that of human reviewers (Figure 1). For full-text screening and data extraction, platform accuracy was ~10% lower than human accuracy. This was because the AI relied too heavily on identifying exact wordings from the population, intervention, comparator and outcome (PICO) criteria, and struggled to extract complex data such as some economic model assumptions and costs.

Figure 1. Accuracy and speed comparison between human reviewers and an AI-powered platform, EasySLR™, at each step of the SLR process

Time savings of ~40% are possible in the real world, but there are caveats

The AI platform outperformed humans in speed for all tasks, saving ~40% of the time needed to complete the SLR tasks compared with humans. The time savings were particularly notable when we needed to explore sub‑populations or adjust the PICO and re‑run the searches – without AI this would have effectively required conducting a separate SLR. Once the parameters were established in EasySLR™, however, refining the PICO criteria and re-running the screening became quick and straightforward, taking a fraction of the time.
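As a rough sketch of how a relative time saving of this kind can be calculated, the snippet below compares total hours with and without AI. The `time_saving_pct` function and the hour values are hypothetical placeholders chosen to illustrate the arithmetic; they are not the project data behind the ~40% figure.

```python
def time_saving_pct(hours_without_ai, hours_with_ai):
    """Return the percentage of time saved relative to the non-AI workflow."""
    if hours_without_ai <= 0:
        raise ValueError("hours_without_ai must be positive")
    return 100 * (hours_without_ai - hours_with_ai) / hours_without_ai


# Hypothetical totals, for illustration only (not study data).
saving = time_saving_pct(hours_without_ai=100.0, hours_with_ai=60.0)
print(f"Time saved: {saving:.0f}%")
```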

One caveat is that the extent of time savings can vary depending on the type of project (systematic versus targeted review), the complexity of the SLR question and the reviewers’ familiarity with AI prompting. Some of these complexities could be mitigated by using AI tools that improve prompting, optimize protocols and train AI in human decision making, and therefore drive even greater time efficiencies in AI‑powered SLRs.

Conclusion

An AI-powered platform, EasySLR™, can replace a human reviewer and save ~40% of the time needed to conduct an SLR. Using AI in SLRs can significantly reduce the human workload, but limitations remain, such as reduced AI accuracy in full-text screening and data extraction. To ensure that AI‑driven time savings never come at the expense of accuracy, new features that strengthen AI understanding will be essential across all AI SLR tools as they continue to evolve.
