Can AI reliably replace human reviewers in literature reviews?

Can AI reliably replace human reviewers in literature reviews?

Can AI reliably replace human reviewers in literature reviews?

Can AI reliably replace human reviewers in literature reviews?

It is predicted that the EU Joint Clinical Assessment (JCA) will require significant time and resource investments from countries and manufacturers, including the crucial development of systematic literature reviews (SLRs) to inform JCA dossier completion. Based on the current JCA guidance, these SLRs are predicted to have up to 18 different population, intervention, comparator, outcome (PICO) criteria and 720 analyses per required indication, a stark increase from the one PICO/analysis required by current methodology. With this exponential increase of SLR work comes the requirement for a new approach that can manage the volume and resource requirements under short timelines. We examined whether current artificial intelligence (AI) technology can achieve this and what the process/time savings may be.

We tested whether AI could save time on key SLR tasks, how accurately it performed these tasks and whether it could replace humans or otherwise reduce their workload. The key SLR tasks were:

- Defining the PICO criteria

- Developing a search strategy

- Abstract screening

- Full text screening

- Data extraction

- Data checking

We conducted each of these tasks using AI in parallel to the existing (human) process and recorded time and accuracy differences. We chose the large language model (LLM) ChatGPT as a representative AI tool for this task, owing to its ease of use, ability to perform all the key SLR tasks, wide availability, and our good understanding of its functionality.

PICO criteria and search strategy

As a first step, we asked ChatGPT to define the PICO criteria for the literature review based on a clear research question. Although the criteria it produced were a good starting point, they were frequently too general and required considerable human editing. In our example PICO, ChatGPT struggled to identify methodological outcomes and criteria with enough nuance to answer the question. We then prompted ChatGPT to produce a search strategy based on a specific research question and PICO criteria. ChatGPT was able to produce a plausible search strategy, but on closer inspection the search included fabricated Medical Subject Headings (MeSH) terms and when run, the strategy returned 0 results compared to the 217 results from the strategy developed by a human.

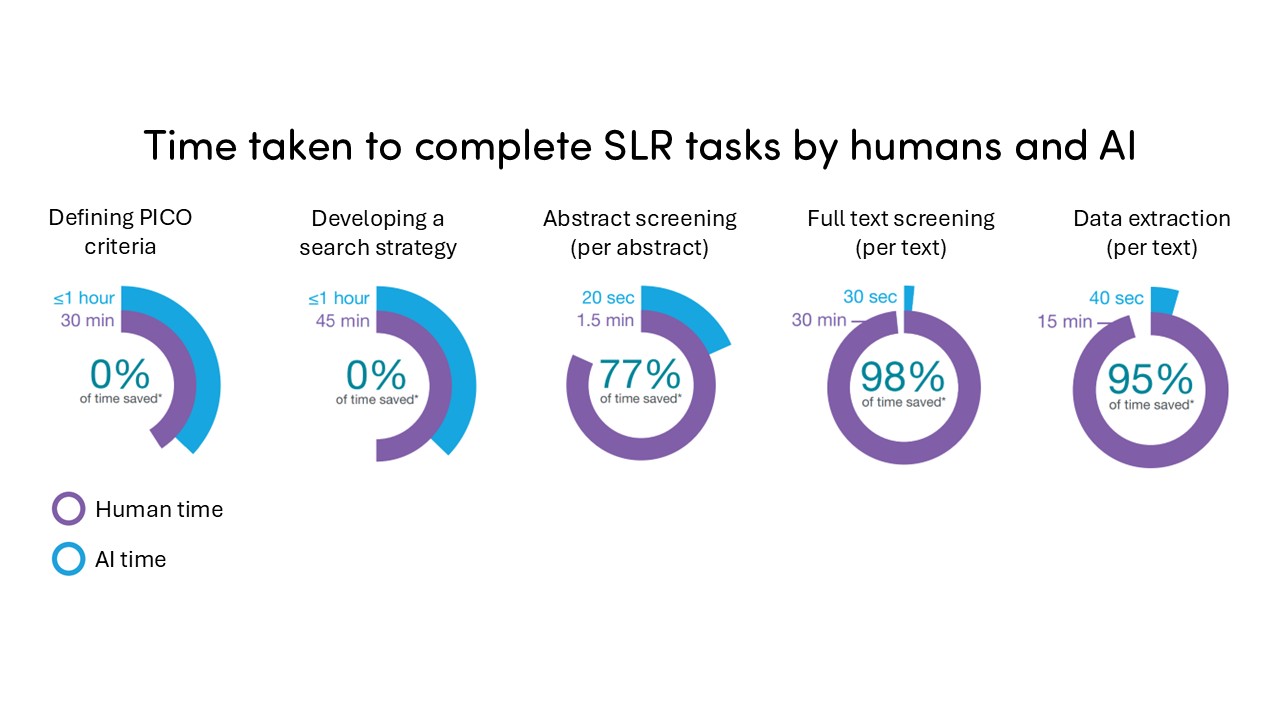

Despite these errors, ChatGPT produced both PICO criteria and an initial search strategy in ~30 seconds each. It took the human reviewer ~30 minutes to develop the PICO criteria, and ~45 minutes to develop the search strategy (see Figure 1). While it was quicker upfront, the ChatGPT PICO criteria and search strategy required significant fine-tuning by a human to make them fit for purpose, taking ~30 minutes to an hour to review and correct. Therefore, while a useful first step, ChatGPT could not replace a human based on accuracy, and due to the time taken to correct and refine, there were minimal time savings compared to a human developing the PICO criteria and search strategy from scratch.

Figure 1: Time taken to complete SLR Tasks by human and AI

*Time saved is based on one reviewer; another reviewer is required at each stage to satisfy methodology

Abbreviations:

AI: artificial intelligence

SLR: systematic literature review

PICO: population, intervention, comparator, outcome

Abstract and full text screening

We uploaded the abstract/full text into ChatGPT and asked it to confirm whether the document fitted the PICO criteria. ChatGPT performed abstract screening in 20 seconds compared to the ~1.5 minutes a human needed to complete the task. For full text screening, ChatGPT performed this task in just 30 seconds compared to ~30 minutes for a human reviewer (see Figure 1).

Despite the time savings, the results produced were not always accurate. Compared to the human reviewer, ChatGPT failed to correctly screen 20% of texts, both at the abstract and full text screening stage. However, when prompted to double check whether the incorrectly included/excluded texts fitted the PICO criteria, ChatGPT could eventually screen them correctly. Some PICO criteria were perceived by ChatGPT to be more ambiguous than others, and it was less accurate at including/excluding correctly – these required human intervention to ensure the texts were screened appropriately. In our study, ChatGPT was equally successful at screening inclusions and exclusions.

The SLR methodology demands that two reviewers screen all abstracts/full texts and reach consensus on inclusions/exclusions. Due to the relative accuracy of ChatGPT, it could be used to replace one human reviewer, with the other reviewer screening all abstracts/texts and reaching consensus to satisfy the SLR methodology. Such modifications to the SLR methodology would need to be agreed ahead of time with JCA assessors, but if applicable, could produce significant time savings.

Data extraction

We uploaded full texts into ChatGPT and asked it to extract seven specific methodology data points from each. ChatGPT completed this task in ~40 seconds for all data points per full text compared to 15 minutes taken by the human reviewer (see Figure 1).

ChatGPT accurately identified 81.4% of the seven methodology data points we asked it to extract from the full texts; however, the accuracy of data extraction in the literature ranges from 41.2% to 100%. We found that ChatGPT was more accurate identifying data points such as the trial name, type of trial, trial locations and treatments investigated, but less accurate identifying exclusion criteria and durations patients were given specific treatments. Similar findings have been reported in the literature, with AI struggling to extract more complex data points, such as inclusion criteria, odds ratios and 95% confidence intervals, and figures or tables. While the extracted data in our sample still required checking by a human to satisfy SLR methodology, and it needed to be manually copied into an extraction spreadsheet, our study showed that ChatGPT can reliably halve the human workload in some extractions.

AI use in practice

In August 2024, the National Institute for Health and Care Excellence (NICE) released its position statement on the use of AI in evidence generation, highlighting that if companies wish to employ AI they should engage NICE proactively, both in early engagement and with appropriate technical teams in later stages of evidence generation. All AI methods should undergo technical and external validation and the results should be presented to NICE. While it is acknowledged that AI could be time and resource saving, additional engagement and review steps requested by NICE may negate some (or most) of the savings seen in our study and the literature. JCA has so far not provided a position statement on the use of AI. If it follows NICE’s lead, it could formalize the requirements and timelines for AI incorporation and clarify what time and resource savings manufacturers can expect.

It is clear that AI can provide numerous benefits to the SLR process, particularly by increasing the efficiency of time-consuming tasks, although it is not yet autonomous and requires human oversight. The JCA process promises the development of numerous PICOs and SLRs to satisfy the needs of every country, tasks where AI, once optimized, could as much as halve the workload. Guidelines around practical AI use in the SLR space are urgently needed for manufacturers and countries to understand how these advancements can be harnessed. Other tools, such as natural language processing (NLP) and retrieval-augmented generation (RAG) models, are also being trialled and are expected to produce even better results in this space.

Can AI reliably replace human reviewers in literature reviews?

About the author

Can AI reliably replace human reviewers in literature reviews?

Can AI reliably replace human reviewers in literature reviews?

Contact CTA

Start a conversation

Find out how our experts can address your healthcare communication challenges.